Building a screen reader plugin with /text/speak Robot

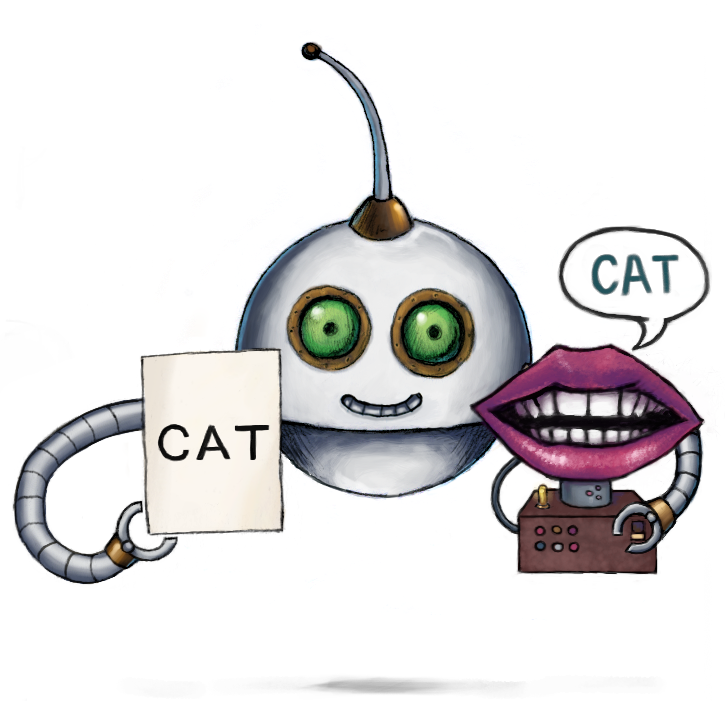

We recently discussed an update to one of our beloved AI Robots, and today, we're building on that momentum by adding another sibling to the AI Robot family. Say hi to our new /text/speak Robot! Like the last AI Robot we covered, the /speech/transcribe Robot, this Robot's key functionality centers on speech. But instead of writing text from speech, it converts text to speech (TTS).

Since this is such an exciting addition, my co-worker Joseph and I decided we would each create a project with this Robot at the heart of the design, then write a blog post covering our design process to show this Robot's versatility. So if you enjoy this post, look out for Joseph's, coming soon!

For my project, I'll create a screen reader that can be easily plugged into your website to convert an entire page's worth of text into speech at the click of a button.

Prerequisites

Before getting started, you need to set up a standard website folder. Go ahead and create the following files: index.html, index.js and, optionally, style.css. To not bloat this blog, we'll only be discussing the contents of the js file and where to interface it with our HTML file. Still, if you want to copy the entire contents of our other files, along with the loading animation GIF that is used later on in this showcase, you can view the following repository.

HTML

Let's get started by setting up the HTML element of our application. As specified above, we won't discuss creating a web page, just the necessary elements to interface with our JavaScript file. So go ahead and create a boilerplate HTML file with the following elements inside the body tag:

<button id="button_event" onclick="runScript()">Generate</button>

<div data-screenreaderlanguage="en-US">

<p>Sample Text</p>

</div>

<script src="index.js"></script>

This sets up the UI element of our application. The button we've created fires off our /text/speak Assembly request using the text contained within our div tag. To reference this div tag, we give it a data attribute, whose value our script also uses to select the Robot's target language from the options outlined in our docs. Lastly, we link our JavaScript file at the end of the body.

To interface with the Transloadit API, we'll be using Robodog, a slimmed-down version of our free file uploader Uppy.

Note: Robodog has been deprecated. For new integrations, use Uppy’s Transloadit plugin (Dashboard UI or a custom UI).

Within the head of your HTML page, place the following line:

<script src="https://releases.transloadit.com/uppy/robodog/v1.10.7/robodog.min.js"></script>

JavaScript

Getting our text

Heading to our JavaScript file, we can now begin writing our program. The first thing we need to do is parse our website's readable text and assign it to a variable for later use.

[...]

const result = document.querySelectorAll('[data-screenreaderlanguage]')

const textArray = []

result.forEach((e) => textArray.push(e.innerText))

const language = document.querySelector('[data-screenreaderlanguage]').dataset.screenreaderlanguage

[...]

In this code, we use the Document.querySelectorAll() method to collect a list (a NodeList) of all elements that carry the data-screenreaderlanguage attribute. We use a data attribute so that, if we want to exclude any text from our screen reader output (such as text inside a <code> tag), we can close our div tag before that unwanted text, then open a new div tag with the same data attribute where we want our screen reader to continue processing text.

With those elements gathered, we still need to extract the readable text from them. So first, we initialize an array to store our readable text, then use the forEach() method to loop through each element in result and save each element's innerText value.

Closing this section off, we set a language variable so our /text/speak Robot knows our target language later in our program. To do this, we read the screenreaderlanguage value from the dataset of the first matching element.
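As a rough sketch of the collection step described above, the helper below gathers both the text and the language tag in one place. The name collectReadableText and the 'en-US' fallback are assumptions made for illustration and are not part of the original script. It accepts any array-like of objects with innerText and dataset properties, so it can also be tried outside a browser.

```javascript
// Hypothetical helper (not part of the original script): gathers readable text
// and the language tag from elements marked with data-screenreaderlanguage.
function collectReadableText(elements, fallbackLanguage = 'en-US') {
  const list = Array.from(elements)

  // Keep each element's visible text, skipping blocks that are empty
  const textArray = list
    .map((el) => (el.innerText || '').trim())
    .filter((text) => text.length > 0)

  // The first marked element decides the language, as in the walkthrough
  const first = list[0]
  const language =
    (first && first.dataset && first.dataset.screenreaderlanguage) || fallbackLanguage

  return { textArray, language }
}

// In the browser, this would be called as:
// collectReadableText(document.querySelectorAll('[data-screenreaderlanguage]'))
```

Trimming and dropping empty blocks is a choice made for this sketch; the walkthrough's own code pushes every innerText value as-is.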

Converting for Uppy

With our data in place, we need to make it suitable for our Robodog instance to handle.

[...]

[...]

This line creates a new text file object that contains our previous text array.
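To illustrate the conversion, here is a minimal sketch that wraps the collected text in a File object using the browser's File constructor. The helper names joinTextBlocks and toTextFile are hypothetical, and joining the blocks with newlines is an assumption of this sketch: if the array itself is passed as a single part, it is converted to a string with commas between the items.

```javascript
// Sketch only: these helper names are illustrative, not part of the original script.

// Join the collected text blocks with newlines so that no stray commas
// end up in the text that gets read aloud.
function joinTextBlocks(textArray) {
  return textArray.join('\n')
}

// Wrap the joined text in a plain-text File that an uploader can handle.
function toTextFile(textArray, fileName = 'mytextfile.txt') {
  return new File([joinTextBlocks(textArray)], fileName, { type: 'text/plain' })
}
```

Calling toTextFile(textArray) would then produce the file object that gets handed to the uploader.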

Loading animation

To let users know that processing is under way, we want a loading animation to play while our /text/speak Robot is working. To do this, we need some placeholder variables in place so we can replace our button element with a GIF. Then, if the text processing succeeds, we replace that GIF element with an audio player.

[...]

const buttonEl = document.getElementById('button_event')

const tmpGif = document.createElement('img')

const audioPlayer = document.createElement('AUDIO')

tmpGif.src = 'loader.gif'

tmpGif.width = 100

tmpGif.height = 100

tmpGif.id = 'tmpGif'

[...]

Here we have declared three variables to store elements of our HTML document: the first variable, buttonEl, references an existing element, whereas the other two variables create new elements. Below that, we assign our replacement GIF element several attributes: a source GIF file from our working directory, its dimensions, and an ID to refer to later in our program.

Firing script

With those preliminary steps in place, we can now integrate the main functionality of our program. Let's dive into creating the "generate" button script.

To get started, declare a function with the same name as the function we referenced earlier in our HTML page.

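[...]

function runScript() {

// Wrap the collected text in a file object that Robodog can upload
const mytextfile = new File([textArray.join('\n')], 'mytextfile.txt', { type: 'text/plain' })

// Swap the button for the loading GIF while the Assembly runs
buttonEl.parentNode.replaceChild(tmpGif, buttonEl)

[...]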

With our function set up, we can use our first DOM manipulation method, parentNode.replaceChild(). This replaces our page's button with the new GIF element when our function is executed.

Now that the GIF is in place, signifying that data is being processed, we can use the Transloadit API to synthesize the speech for our program. To do this, we use the Robodog script that we imported into our HTML file.

[...]

const Uppy = window.Robodog.upload([mytextfile], {

waitForEncoding: true,

params: {

auth: { key: 'TRANSLOADIT_AUTH_KEY' },

steps: {

':original': {

robot: '/upload/handle',

},

speech: {

use: ':original',

robot: '/text/speak',

provider: 'aws',

target_language: language,

},

},

},

})

.then((bundle) => {

[...]

Our call to Robodog's upload() takes two parameters. The first is the list of files to upload, which here contains the text file variable that we created earlier in our program. The second is an object containing all other options.

In our object parameter, we need to set up a few options. First, waitForEncoding needs to be set to true so we can access our Assembly results later on in our program. Next, we need to insert our authentication key into the auth parameter. This key can be found under your Transloadit console's Credentials tab. Lastly, we need to insert our Template Steps: one for uploading and the other for the actual speech synthesis.

A few parameters make up the speech Step. First, we must inform this Step that we want to use the text file we uploaded; this is accomplished with the "use" parameter. Next, we use the "robot" parameter to select our /text/speak Robot. The following parameter, "provider", lets us decide the backend API used for the speech synthesis. The available values are "gcp" and "aws". Each provider has a variety of different voices, but we'll just be sticking with the default. The final parameter we'll use, "target_language", tells our API in which language we want our text pronounced. This value comes from the language variable we set up at the beginning of our program.

Once this functionality is in place, we chain JavaScript's then() method onto the promise that Robodog returns, so we can work with our speech data once the Assembly has finished.
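To make the shape of these options easier to see, here is a small sketch that builds the params object from its inputs. The function name buildSpeakParams and the provider check are assumptions for illustration; the Step and parameter names mirror the ones used in this walkthrough.

```javascript
// Hypothetical helper: builds the `params` object used in this walkthrough.
function buildSpeakParams(authKey, language, provider = 'aws') {
  // The walkthrough lists "aws" and "gcp" as the available providers
  if (!['aws', 'gcp'].includes(provider)) {
    throw new Error(`Unsupported provider: ${provider}`)
  }

  return {
    auth: { key: authKey },
    steps: {
      // Receives the uploaded text file
      ':original': { robot: '/upload/handle' },
      // Turns the uploaded text into speech
      speech: {
        use: ':original',
        robot: '/text/speak',
        provider,
        target_language: language,
      },
    },
  }
}
```

The result could be passed as the params option, for example params: buildSpeakParams('TRANSLOADIT_AUTH_KEY', language), which keeps the Step definitions in one place if the provider or language needs to change.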

Using the result

With Robodog all set up, we can now store the resulting speech so we can later play it from the browser.

[...]

const audio_url = bundle.results[0].url

audioPlayer.setAttribute('src', audio_url)

audioPlayer.setAttribute('controls', 'controls')

audioPlayer.setAttribute('autoplay', 'autoplay')

audioPlayer.setAttribute('style', 'width: 100%;')

tmpGif.parentNode.replaceChild(audioPlayer, tmpGif)

})

[...]

Inside our then() method, using the promise provided by Robodog, we can read the Assembly's JSON results and save the result URL to a new variable, audio_url. Then, to get the audio player up and running, we set some attributes that provide the audio's source, enable the playback controls, and instruct the audio to begin playing as soon as the element has loaded. Finally, we swap the GIF for the audio player with the same replaceChild() method that we used earlier in this showcase. With that all in place, a nifty screen reader has now been generated and integrated into your website!
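One thing worth guarding against is an Assembly that finishes without any results. The sketch below reads the same bundle.results[0].url value as the code above, but throws when it is missing. The helper name firstResultUrl is hypothetical, and the only assumption about the bundle is the results array shown in this walkthrough.

```javascript
// Hypothetical helper: returns the URL of the first Assembly result,
// failing loudly when the bundle holds no usable result.
function firstResultUrl(bundle) {
  const results = (bundle && bundle.results) || []
  const first = results[0]

  if (!first || !first.url) {
    throw new Error('The Assembly finished without a usable result URL')
  }

  return first.url
}
```

An error thrown inside a then() callback rejects the promise chain, so a failure here would be passed on to whatever catch() handler follows.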

We can't forget about having a failsafe option, though. Here's where we use JavaScript's catch() method to handle any errors.

[...]

.catch((screenreaderErr) => {

console.error({ screenreaderErr })

tmpGif.parentNode.replaceChild(buttonEl, tmpGif)

})

}

[...]

Any errors that occur will be logged to the console, and our processing GIF will be swapped back for the original button element. This means that if you were to momentarily lose your network connection and then regain it, you could click the generate button again to restart the speech generation.
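The overall flow of showing a loader, then either presenting the result or restoring the button, can be sketched independently of the DOM. The helper runWithLoader and its three callbacks are hypothetical names introduced for this example; in our program, they would correspond to the replaceChild() calls shown earlier.

```javascript
// Hypothetical helper: shows a loading state, runs an async task, then either
// shows the result or restores the starting state so the user can retry.
function runWithLoader(task, { showLoading, showResult, restore }) {
  showLoading()

  return Promise.resolve()
    .then(task)
    .then((result) => {
      showResult(result)
      return result
    })
    .catch((err) => {
      // Log the problem and put the original UI back in place
      console.error({ err })
      restore()
    })
}
```

Keeping the UI changes in callbacks like this makes the retry behavior easy to check without a browser, at the cost of a little extra indirection.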

And with that, this showcase comes to an end! We hope you agree it has further demonstrated the sheer versatility of our API. Of course, you can simply add the program we created to any website for a quick and easy screen reader – but don't just stop there! Instead, use this blog as a set of building blocks to expand your projects with, and be sure to let us know about the results :) Our /text/speak Robot is available to all our paying customers, so you may want to consider upgrading your account if this blog was of interest to you. Our first paid tier comes in at $49/mo with 10GB of encoding data!