Building an alt-text to speech generator with Transloadit

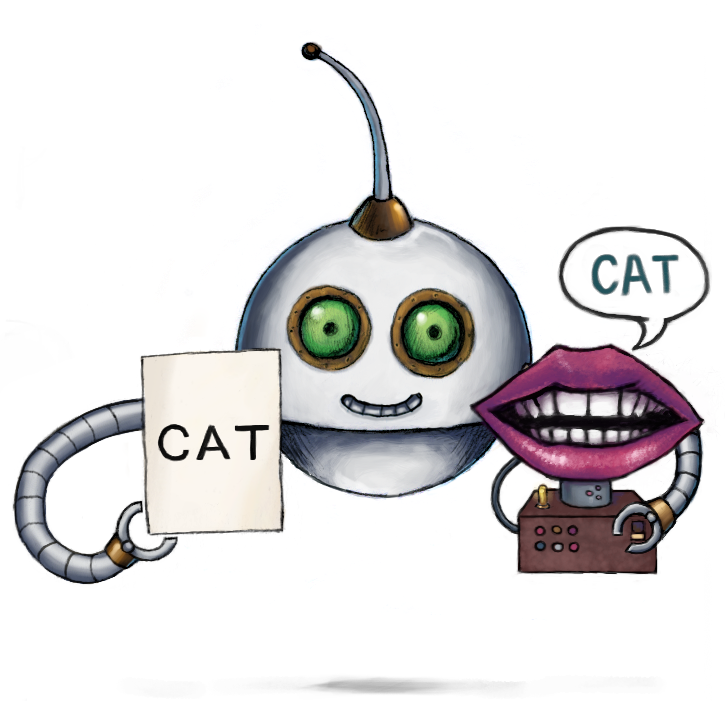

It's been a while since we showcased the /text/speak Robot on a Let's Build. So today, we're going to show off some of its potential by building an automatic alt-text-to-speech generator for images. The focus of today's project is combining our Robots in an interesting way to ultimately improve the accessibility of your project.

If you find this post interesting and want to take these practices even further, check out Charlie's article on creating a screen reader website plugin using the Transloadit API, as well as our other AI posts.

Getting started

In this post, we'll examine how to use the /image/describe Robot in series with the /text/speak Robot to describe the contents of an image, and serve that description as a short audio clip to your website's users.

To deliver our Assembly results, we'll use the /file/serve Robot, which serves them through the global Transloadit CDN and helps keep data consumption low.

Importing our image

First, we import our input image from an S3 bucket. You can use your preferred storage service instead, or simply fetch the input file over HTTPS if it has a publicly accessible URL.

    {
      "steps": {
        "import": {
          "robot": "/s3/import",
          "credentials": "s3defaultBucket",
          "path": "default/${fields.input}"
        }
      }
    }

Using Assembly Variables in our Template Step, we import fields.input. This lets us pick the image to import from the URL path. For example, for https://my-app.tlcdn.com/describe-image/items.jpg, fields.input will read items.jpg, so the Step retrieves default/items.jpg from your S3 bucket.
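To make that mapping concrete, here is a minimal Python sketch of how the last segment of the request URL ends up in the S3 path. The helper name s3_path_for is hypothetical, and it assumes that fields.input is simply the final path segment, as in the example above. Transloadit performs this substitution for you, so treat this as a model of the behaviour rather than its implementation.

```python
from posixpath import basename
from urllib.parse import urlparse


def s3_path_for(cdn_url: str, prefix: str = "default/") -> str:
    """Model how ${fields.input} fills in the "path" of the import Step.

    Hypothetical helper: assumes fields.input is the last segment of the
    URL path, and that the Template prefixes it with "default/".
    """
    field_input = basename(urlparse(cdn_url).path)  # "items.jpg" for the example URL
    if not field_input:
        raise ValueError("URL does not end in a file name")
    return f"{prefix}{field_input}"


print(s3_path_for("https://my-app.tlcdn.com/describe-image/items.jpg"))
# prints: default/items.jpg
```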

Describing the image

In our next Step, we use the /image/describe Robot to create a JSON list of the items it recognizes in our image.

    {
      "steps": {
        ...
        "described": {
          "use": "import",
          "robot": "/image/describe",
          "provider": "gcp",
          "format": "json",
          "granularity": "list",
          "result": true
        }
      }
    }

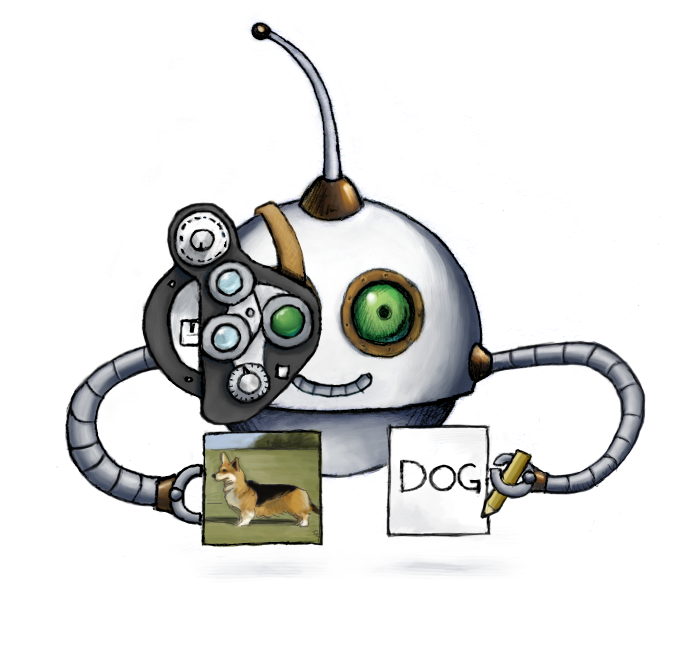

Providing this image:

Will give us this result:

    [
      "Glasses",
      "Photograph",
      "White",
      "Light",
      "Product",
      "Black",
      "Eyewear",
      "Luggage and bags",
      "Everyday carry",
      "Bag"
    ]

While this list isn't perfectly accurate, it gives a good overview of the items in the image. In this case, we're leveraging Google Cloud's API for the AI, but you could switch to AWS by just changing "provider" to "aws", which would get you a different result set. Note that these AIs are continuously improving, so the results could change if you run the same instructions a month from now.
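If you build your Template in code rather than by hand, the provider swap is a one-key change. The sketch below is only an illustration: with_provider is a hypothetical helper, and it accepts just the two providers named in this post.

```python
import copy

# The "described" Step from this post, written as a Python dict.
described_step = {
    "use": "import",
    "robot": "/image/describe",
    "provider": "gcp",
    "format": "json",
    "granularity": "list",
    "result": True,
}


def with_provider(step: dict, provider: str) -> dict:
    """Return a copy of a Step with a different "provider" value."""
    if provider not in ("gcp", "aws"):  # only the providers mentioned in this post
        raise ValueError(f"unsupported provider: {provider}")
    updated = copy.deepcopy(step)  # leave the original Step untouched
    updated["provider"] = provider
    return updated


aws_step = with_provider(described_step, "aws")
print(aws_step["provider"])  # prints: aws
```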

Creating an audio description

Our penultimate Step generates an audio file from our image description using our /text/speak Robot.

    {
      "steps": {
        ...
        "speak_description": {
          "use": "described",
          "robot": "/text/speak",
          "provider": "gcp",
          "result": true
        }
      }
    }

Serving the result on the web

Finally, we serve the audio file using Transloadit's CDN, which makes our results easily accessible anywhere on the web. It's not only faster but also cheaper, so please check it out.

    {
      "steps": {
        ...
        "serve": {
          "use": "speak_description",
          "robot": "/file/serve"
        }
      }
    }

Combining all of our Steps, the full Template now reads:

    {
      "steps": {
        "import": {
          "robot": "/s3/import",
          "credentials": "s3defaultBucket",
          "path": "default/${fields.input}"
        },
        "described": {
          "use": "import",
          "robot": "/image/describe",
          "provider": "gcp",
          "format": "json",
          "granularity": "list",
          "result": true
        },
        "speak_description": {
          "use": "described",
          "robot": "/text/speak",
          "provider": "gcp",
          "result": true
        },
        "serve": {
          "use": "speak_description",
          "robot": "/file/serve"
        }
      }
    }
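Because each Step names its input through "use", a typo in a Step name quietly breaks the chain. As a rough sanity check before saving the Template, a sketch like the following can confirm that every "use" points at a Step that exists. The function missing_step_references is a hypothetical helper of our own, not part of any Transloadit SDK, and it only handles the plain-string form of "use" shown in this post.

```python
# The full Template from this post, written as a Python dict.
template = {
    "steps": {
        "import": {
            "robot": "/s3/import",
            "credentials": "s3defaultBucket",
            "path": "default/${fields.input}",
        },
        "described": {
            "use": "import",
            "robot": "/image/describe",
            "provider": "gcp",
            "format": "json",
            "granularity": "list",
            "result": True,
        },
        "speak_description": {
            "use": "described",
            "robot": "/text/speak",
            "provider": "gcp",
            "result": True,
        },
        "serve": {
            "use": "speak_description",
            "robot": "/file/serve",
        },
    }
}


def missing_step_references(template: dict) -> list:
    """List "step -> use" pairs whose "use" value names no existing Step."""
    steps = template["steps"]
    missing = []
    for name, step in steps.items():
        use = step.get("use")
        if use is None:
            continue  # Steps such as "import" take no input from another Step
        if use not in steps:
            missing.append(f"{name} -> {use}")
    return missing


print(missing_step_references(template))  # prints: []
```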

Results

If all goes well, you should end up with a result like this:

Perhaps what's most exciting is that these results aren't stored locally on the website but instead are being generated, stored, and served on the fly. Try switching the image, and see how the image description automatically changes with it!

Instead of adding an audio control as in this example, we could also add the descriptions of the image to the image tag's alt and title attributes. Any image without alternative text can now be described automatically for people with impaired vision, and the description also gives search crawlers and other machines better clues as to what the content is about.
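As a rough illustration of the alt and title idea, the Python sketch below turns the label list from earlier into an image tag. The helper img_tag and its max_labels cut-off are our own assumptions for this example; how you fetch the labels from your Assembly results is left out.

```python
from html import escape

# Labels returned by /image/describe for the example image in this post.
labels = [
    "Glasses", "Photograph", "White", "Light", "Product",
    "Black", "Eyewear", "Luggage and bags", "Everyday carry", "Bag",
]


def img_tag(src: str, labels: list, max_labels: int = 5) -> str:
    """Build an <img> tag whose alt and title hold the first few labels.

    Hypothetical helper: max_labels is an arbitrary cut-off chosen to
    keep the attribute text short.
    """
    description = ", ".join(labels[:max_labels])
    safe_description = escape(description, quote=True)  # safe inside attributes
    safe_src = escape(src, quote=True)
    return f'<img src="{safe_src}" alt="{safe_description}" title="{safe_description}">'


print(img_tag("items.jpg", labels))
```

Escaping the description matters here because label text ends up inside HTML attributes, where a stray quote would otherwise break the tag.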

If you're interested in seeing an example implementation on how to do this, please feel free to reach out to us!

We hope to see you use this new /text/speak Robot in your Transloadit projects. It's available to everyone on the Startup plan and up, so if you're on the Community plan, maybe consider making the switch so you can bring your new project to life. 🌱